Literary Texts as Cognitive Assemblages: The Case of Electronic Literature

In a paper presented at the “Arabic Electronic Literature” conference in Dubai (February 2018), N. Katherine Hayles considers born-digital writing as a cognitive assemblage of technical devices and readerly, interpretive activity.

Preface

This essay is modified from a keynote lecture I gave at the conference “Arabic Electronic Literature: New Horizons and Global Perspectives,” sponsored by the Rochester Institute of Technology Dubai on February 25-27, 2018. The conference organizers, Jonathon Penny from RIT and Reham Hosny from Minia University, arranged for simultaneous English-Arabic translations, enabling all participants to understand and respond to each other’s presentations. I learned that an Arabic group dedicated to electronic literature, The Unity, already exists and has several hundred members. Although it was not clear how active the group is at present, a spokesman was given space in the program to explain its history and agenda. In addition, I experienced work done by Arab participants. I was particularly struck by Mohamed Habibi’s “Vision of Hope.” Habibi, a Saudi scholar and poet, crafted an eloquent poetic video featuring book pages being slowly turned, natural settings photographed with close attention to forms, other images that revealed their similarities to human constructions, and various domestic interiors that gestured toward the love of life and living. Working as an independent artist, he had devoted countless hours to this project simply for the joy of creative expression, which in itself is a gift of hope to those of us who saw it, and to the field of electronic literature.

The major change in this essay from my oral presentation is the substitution of Johannes Heldén’s and Håkan Jonson’s Evolution for John Cayley’s riverIsland, a work about which I have written elsewhere. I want to give space to Evolution because its claim to enact the “Imitation Game” proposed by Alan Turing in 1951 is particularly relevant to the argument I develop below.

Cognitive Assemblages

Imagine you are in a conference room in Dubai. Imagine you have your coffee and are settled into your seat, ready for the presentation to begin. The speaker is using PowerPoint (no surprise), and you are taking notes on your tablet. You are aware that you, and most of the others in the room, are connected in some way to digital technologies, and yet you, and almost all the others, think of yourselves as autonomous beings. You can put down the tablet if you choose; the speaker can turn off her computer and speak from memory. The digital devices are merely conveniences, faster and more flexible versions of the outsourcing of human memory that began with the invention of writing. Yet is this really all they are?

Dubai is an interesting location to ask this question, for it is a modern, fast-moving city with freeways, excellent Internet networks, and fountains gushing throughout the area known as the “Silicon Oasis.” Through the carefully watered landscape, however, the desert peeks through, reminding us that this entire city sits on seas of sand, existing only because of dense infrastructures of water purification and delivery systems, electrical grids, traffic control systems, internet servers, routers and cables, sewage disposal plants, and other modern technological systems that have momentarily tamed the desert and enabled you to sip your coffee without having to think about all this for one moment. Your supposed autonomy is an epiphenomenon, an emergent result of all the technological couplings, communications, energy flows, and material affordances that are deeply integrated with computational media, the intelligent control systems that keep everything humming smoothly. Without these devices, the city (and the vast majority of urban centers in the developed world) simply could not exist in their present configurations. Your forgetting this crucial fact testifies to its very pervasiveness and efficiency; you can afford to forget because the affordances will keep going regardless—at least for a time, at least until the next blackout or breakdown.

A more accurate picture, I want to suggest, is not you as an autonomous being but as a component in a cognitive assemblage. Following Gilles Deleuze and Félix Guattari and Bruno Latour (Deleuze and Guattari, 1987; Latour, 2007), I conceptualize an assemblage as a flexible and constantly shifting network that includes human and technical actors, as well as energy flows and other material goods. A cognitive assemblage is a particular kind of network, characterized by the circulation of information, interpretations, and meanings by human and technical cognizers who drop in and out of the network in shifting configurations that enable interpretations and meanings to emerge, circulate, interact and disseminate throughout the network. Cognizers are particularly important in this schema because they make the decisions, selections, and interpretations that give the assemblage flexibility, adaptability, and evolvability. Cognizers direct, use, and interpret the material forces on which the assemblage ultimately depends (Hayles 2017).

This schema employs a definition of cognition crafted to include humans, nonhuman others, and technical devices. As stated in my recent book Unthought: The Power of the Cognitive Nonconscious, “cognition is the process of interpreting information in contexts that connect it with meaning” (Hayles 2017: 22). A corollary is that cognition exists as a spectrum rather than a single capability; plants, for example, are minimally cognitive, whereas humans, other primates, and some other mammals are very sophisticated cognizers. It has been traditional since John Searle’s “Chinese Room” thought experiment (Searle 1980) to argue that computers only match patterns with no comprehension of what those patterns mean (in his example, no semantic comprehension of Chinese). This view, however, is increasingly untenable as computational systems become more sophisticated, learning and experiencing aspects of the world through diverse sensors and actuators.

Much depends, of course, on how one defines the central terms “interpretation” and “meaning.” Searle’s example is obviously anthropocentric, since it imagines a man—a sophisticated cognizer—sitting in a room with the rule book and other apparatus he employs, obviously “dumb” affordances. The effect is to dumb down the man to the level of the affordances he uses, a painful reduction of his innate cognitive capacities (hence the anthropocentric bias). We can continue to pat ourselves on the back as being much smarter than computers, but in specific domains such as chess, Go and diagnostic expert systems, computers now perform better than humans. Moreover, the drive toward General Artificial Intelligence (GAI), computational systems able to achieve human-level flexibility across many domains, is estimated by experts to be achievable within this century with a 90% probability (Bostrom 2017: 23). Whatever one makes of this prediction, I argue it is time to move past thought experiments such as Searle’s and take seriously the idea that computers are cognizers, manifesting a cognitive spectrum that ranges from minimal for simple programs up to much more sophisticated cognitions in networked systems with complex multilayered programs and high-powered sensors and actuators.

The fantasy of being completely autonomous has a strong hold on the American imagination, ranging from Thoreau’s Walden (Thoreau 1854) to Kim Stanley Robinson’s Martian terraforming trilogy (Robinson 1993, 1995, 1997). Yet even Thoreau used the planks and nails of a previous settler and walked into town more often than his notebooks allowed.

In the contemporary world, computational media are pervasive and deeply integrated into the infrastructures on which most of us depend. If all computational media in the US were suddenly to be disabled—say by a cyberattack or a series of high-altitude EMPs—millions would die before order could be restored (if ever). Considered as a species, then, contemporary humans are engaged in symbiotic relations with computational media. The deeper and more pervasive this symbiosis, the more entwined are the fates of the dominant species (us) with our symbionts, computational networks.

In the domain-specific area of writing, different attitudes toward this situation are manifested. Dennis Tenen, in his excellent book Plain Text: The Poetics of Computation (Tenen 2017), argues that writers should strive to maintain complete control over their signifying practices. Emphasizing that writing in digital media proceeds via multiple layers of code, or as he calls it, textual laminates (5), Tenen argues that writers should understand and have control over every level of the interlinked layers of code that underlie screenic inscriptions. Since many commercial software packages, such as those from Adobe, employ hidden code layers that writers are legally forbidden even to access, much less change, Tenen passionately advises his readers to avoid these software packages altogether and go with “plain text” (3), open-source software that does not demand compromises or sabotage the writer’s intentions with hidden capitalistic complicities. We may think of Tenen as the Thoreau of digital composition, willing to put up with the inconvenience of not using PDFs and other software packages to maintain his independence and compositional integrity.

Even if a writer chooses to go this route, however, she is still implicated in myriad ways with other infrastructural dependencies on computational networks that come with living in contemporary society in the developed world. So why single out writing as the one area where one takes a stand? Tenen has an answer: compositional practices are cognitive, and therefore of special interest and concern for us as cognitive beings (52). I respect his argument, but I would point out that other practices are also cognitive, and so writing in this respect is not necessarily unique. Moreover, resistance is not the only tactic on the scene. Other writers are of the opposite persuasion, embracing computational media as standing in a symbiotic relation to human authors and interested in exploring the possibility space of what can be done when the computer is viewed as a collaborator. For writers like these, new kinds of questions arise.

How is creativity distributed between author and computer? Where does the nexus of control lie, and who (or what) is in control at different points? What kinds of selections/choices does the computational system make, and what selections/choices do the human authors encode? What role does randomness play in the composition? Is the main interest of the artistic project manifested at the screen, or does it lie with the code? These are the questions that I will explore below.

Slot algorithms: Nick Montfort’s “Taroko Gorge”

One way of enlisting the computer as co-author is to create what Christopher Funkhouser calls “slot” algorithms (Funkhouser 2017: 40), with databanks parsed into grammatical functions (nouns, verbs, adjectives, etc.) and a random generator choosing which word to slot into a given poetic line. The method is straightforward but nevertheless can generate interesting results. One such poem is Nick Montfort’s “Taroko Gorge,” written after he had visited the picturesque site in Taiwan.

The vocabulary evokes the beauty of a natural landscape, including nouns such as “slopes,” “coves,” “crags,” and “rocks,” and verbs like “dream,” “dwell,” “sweep” and “stamp.” Montfort posted the source code at his site, and in a playful gesture, Scott Rettberg substituted his own vocabulary and sent Montfort the result.

In Rettberg’s version, “Tokyo Garage,” the generator produced such lines as “zombies contaminate the processor” and “saxophonists endure the cherry blossoms.” Others took up the game, and Montfort’s site now hosts over a dozen variants by others. Stuart Moulthrop (Moulthrop and Grigar 2017) raises important questions about what this technique implies. “The extension of the text into reinstantiation—the reuse of code structures in subsequent work—raises questions about the identity of particular texts. It also brings into focus the larger identity question for electronic literature. Code can do things and have things done to it that conventional writing cannot … Digital media lend themselves to duplication, encapsulation, and appropriation more readily than did earlier media … differences of scale may be … very large indeed. At some point, pronounced variations in degree may become effectively essential. At the heart of this hyperinflation lies the willful use of databases, algorithms, and other formal structures of computing.” (Kindle version 37-38).

Other than the ease with which code can be remixed, what else can we say about the computer as co-creator here? Obviously, the program understands nothing about the semantic content of the vocabulary from which it is selecting words. Nevertheless, it would be a mistake to assert, à la Searle, that the computer only knows how to match patterns in a brute force kind of way. It knows the data structures and the syntax procedures that determine which category of word it selects; it knows the display parameters specified in the code (in the case of “Taroko Gorge,” continuously rolling text displayed in light grey font on green background); it knows the randomizer that determines the word choice; it knows the categories that parse the words into different grammatical functions; and it knows the pace at which the words should scroll down the screen. This is far more detailed and complex than the “rule book” that Searle imagines his surrogate consulting to construct answers to questions in Chinese that come in as strings through the door slot. Using the philosophical touchstones of “beliefs,” “desires” and “intentions” that philosophers like to cite as the necessary prerequisites for something to have agency, we can say that the computer has beliefs (for example, that the screen will respond to the commands it conveys), desires (fulfilling the functions specified by the program), and intentions (it intends to compile/interpret the code and execute the commands and routines specified there). Although the human writes the code (and other humans have constructed the hardware and software essential to the computer’s operation), he is not in control of the lines that scroll across the screen, which are determined by the randomizing function and the program’s processes.
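The slot technique, and the ease of remixing it, can be illustrated with a minimal sketch. It is not Montfort’s code: the line template is an assumption made for illustration, and the word banks contain only the handful of words quoted above rather than the poem’s full vocabulary.

```python
import random

# Word banks parsed by grammatical function. These are only the words
# quoted in the essay, not the full vocabulary of "Taroko Gorge".
NOUNS = ["slopes", "coves", "crags", "rocks"]
VERBS = ["dream", "dwell", "sweep", "stamp"]


def slot_line(nouns, verbs, rng):
    """Fill a fixed 'noun verb the noun' template with random picks.

    The template itself is a hypothetical stand-in for the poem's grammar.
    """
    subject = rng.choice(nouns)
    verb = rng.choice(verbs)
    obj = rng.choice(nouns)
    return f"{subject.capitalize()} {verb} the {obj}."


def generate(nouns, verbs, n_lines, seed=None):
    """Return n_lines generated lines; a fixed seed makes the run repeatable."""
    rng = random.Random(seed)
    return [slot_line(nouns, verbs, rng) for _ in range(n_lines)]


if __name__ == "__main__":
    for line in generate(NOUNS, VERBS, 4):
        print(line)

    # A remix in the spirit of "Tokyo Garage": same code, new word banks.
    remix_nouns = ["zombies", "saxophonists", "processor", "cherry blossoms"]
    remix_verbs = ["contaminate", "endure"]
    for line in generate(remix_nouns, remix_verbs, 4):
        print(line)
```

Nothing in the program changes between the two runs except the lists passed to it, which is why swapping vocabularies is enough to produce a new work.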

What is the point of such generative programs? I think of John Cage’s aesthetic of “chance operations,” which he saw as a way to escape from the narrow confines of consciousness and open his art to the aleatory forces of the cosmos, at once far greater than the human mind and less predictable in its results (Hayles, 1994). As with generative poetry, a paradox lurks in Cage’s practices: although the parameters of his art projects were chosen randomly, he would go to any length to carry them out precisely. The end results can be seen as collaborations between nonhuman forces and human determination, just as generative poetry is a collaboration between the programmer’s choices and the computer’s randomizing selections along with its procedural operations.

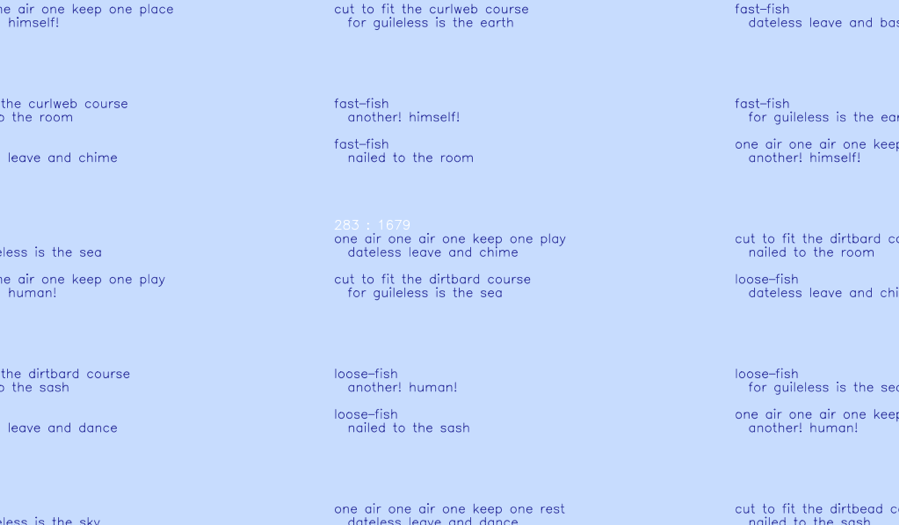

Code Comments as Essay: Sea and Spar Between

My next example is Sea and Spar Between, a collaborative project between prize-winning poet Stephanie Strickland and Nick Montfort. They chose passages from Melville’s Moby-Dick to combine with Emily Dickinson’s poems. This intriguing project highlights a number of issues: gender contrasts between the all-male society of the Pequod and the sequestered life Dickinson led as a near-recluse in Amherst; the sprawling portmanteau nature of Melville’s work versus Dickinson’s tightly constrained aesthetic; the spatial tensions in each work, for example the claustrophobic rendering room aboard the ship versus Dickinson’s famous poem “The Brain is Wider Than the Sky,” and so forth. For this project, much more human selection was used than for “Taroko Gorge,” necessitated by the massive size of Melville’s text and the much smaller, but still significant, corpus of Dickinson’s work.

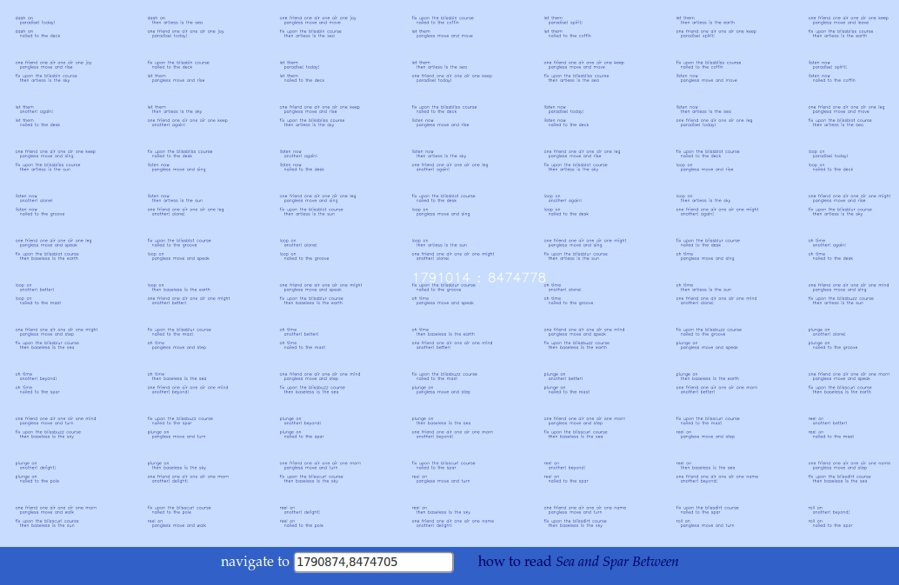

The project displays as an “ocean” on which quatrains appear as couplet pairs. The authors define locations on the display screen through “latitude” and “longitude” coordinates, both with 14,992,383 positions, resulting in about 225 trillion stanzas, roughly the number, they estimate, of fish in the sea. The numbers are staggering and indicate that the words displayed on a screen, even when set to the farthest-out zoom position, are only a small portion of the entire conceptual canvas.

The feeling is indeed of being “lost at sea,” accentuated by the extreme sensitivity to cursor movements, resulting in a highly “jittery” feel. It is possible, however, to locate oneself in this sea of words by entering a latitude/longitude position provided in a box at screen bottom. This move will result in the same set of words appearing on screen as were previously displayed at that position; conceptually, then, the canvas pre-exists in its entirety, even though in practice, the very small portion displayed on the screen at a given time is computed “on the fly,” because to keep this enormous canvas in memory all at once would be prohibitive. As Stuart Moulthrop points out (Moulthrop and Grigar 2017), “Stanzas that fall outside the visible range are not constructed” (Kindle version 35). Quoting Strickland, he points out that “the essence of the work is ‘compression,’ drawing on computation to reduce impossibly large numbers to a humanly accessible scale” (Kindle version 35).
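The arithmetic and the “on the fly” principle can be made concrete with a short sketch. Only the figure of 14,992,383 positions per axis comes from the work; the word lists, the selection rule, the zero-based coordinates, and the wrap-around at the edges are all hypothetical placeholders, and nothing here reproduces the authors’ actual code.

```python
SIDE = 14_992_383  # positions along each axis, as given by the authors

# Placeholder fragments, NOT drawn from Melville or Dickinson.
OPENINGS = ["sail", "drift", "turn", "fall"]
CLOSINGS = ["north", "south", "east", "west", "home"]


def total_stanzas(side=SIDE):
    """Size of the conceptual canvas: one stanza per coordinate pair."""
    return side * side


def stanza_at(lat, lon):
    """Compute the four lines at (lat, lon) purely from the coordinates.

    Nothing is stored or looked up, so the same position always yields
    the same stanza. The selection rule is an assumption for illustration.
    """
    if not (0 <= lat < SIDE and 0 <= lon < SIDE):
        raise ValueError("coordinates out of range")
    picks = (lat, lon, lat + lon, lat * lon)
    return tuple(
        f"{OPENINGS[p % len(OPENINGS)]} "
        f"{CLOSINGS[(p // len(OPENINGS)) % len(CLOSINGS)]}"
        for p in picks
    )


def visible_window(lat, lon, radius=1):
    """Build only the stanzas near (lat, lon); the rest never exist in memory."""
    window = {}
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            pos = ((lat + dy) % SIDE, (lon + dx) % SIDE)  # wrap-around is assumed
            window[pos] = stanza_at(*pos)
    return window


if __name__ == "__main__":
    print(f"{total_stanzas():,} stanzas in the conceptual canvas")
    print(stanza_at(1000, 2000))
    print(len(visible_window(1000, 2000)), "stanzas actually computed")
```

Because each stanza is a pure function of its coordinates, returning to a position regenerates the same words, which is what allows the canvas to “pre-exist” conceptually without ever being held in memory.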

The effect is a kind of technological sublime, as the authors note in one of their comments: “at these terms they signal, we believe, an abundance exceeding normal, human scale combined with a dizzying difficulty of orientation.” As Moulthrop observes, “‘Sea and Spar Between’ asks the reader to swim or skim an oceanic expanse of language. We do not build, we browse” (Moulthrop and Grigar 2017: Kindle 36). Montfort and Strickland reinforce the idea of a reader lost at sea in their essay on this work, “Spars of Language Lost at Sea” (Montfort and Strickland 2013). They point out that randomness does not enter into the work until the reader opens it and begins to read: “It is reader of Sea and Spar Between who is deposited randomly in an ocean of stanza each time she returns to the poem. It is you, reader, who are random” (8).

An unusual feature is the authors’ essay within the source code, marked off as comments (that is, non-executable statements). The essay is entitled “cut to fit the toolspun course,” a phrase generated by the program itself. The comments make clear that human judgments played a large role in text selection, whereas relatively more of the computational work went into creating the screen display and giving it its characteristic “jerky” movements. The authors comment,

//most of the code in Sea and Spar Between is used to manage the

//interface and to draw the stanzas in the browser’s canvas region. Only

//2609 bytes of the code (about 22%) are actually used to combine text

//fragments and generate lines. The remaining 5654 bytes (about 50%)

//deals with the display of the stanzas and with interactivity.

By contrast, the selection of texts was an analog procedure, intuitively guided by the authors’ aesthetic and literary sensibilities.

//The human/analog element involved jointly selecting small samples of

//words from the authors’ lexicons and inventing a few ways of generating

//lines. We did this not quantitatively, but based on our long acquaintance

//with the distinguishing textual rhythms and rhetorical gestures of Melville

//and Dickinson.

Even so, the template for constructing lines is considerably more complex than that of “Taroko Gorge.” The authors explain,

//We define seven template lines: three first and four second lines. These

//line templates and the consequences they involve were designed to evoke

//distinctive rhetorical gestures in the source texts, as judged

//intuitively by us, and to foreground Dickinson’s strong use of negation.

The selections include compound words (“kennings,” as the authors call them) with different rules governing how the beginning and ending lines are formed:

//butBeginning and butEnding specify words that begin and end

//one type of line, the butline.

To create the compound words, the computer draws from two compound arrays and then “joins the two arrays and sorts them alphabetically.”

In this project, what does the computer know? It knows the display parameters, how to draw the canvas, how to locate points on this two-dimensional surface, and how to center a user’s request for a given latitude and longitude. It also knows how to count syllables and what parts of words can combine to form compound words. It knows, the authors comment, how “to generate each type of line, assemble stanzas, draw the lattice of stanzas in the browser, and handle input and other events.” That is, it knows when input from the user has been received and it knows what to do in response to a given input. What it does not know, of course, are the semantic meanings of the words and the literary allusions and connotations evoked by specific combinations of phrases and words. Nevertheless, the subtlety and scope of the computer’s beliefs and intentions far exceed the stereotyped “rule book” of Searle’s thought experiment.

In reflecting on the larger significance of this collaboration, the (human) authors outline what they see as the user’s involvement as responder and critic.

//Our final claim: the most useful critique

//is a new constitution of elements. On one level, a reconfiguration of a

//source code file to add comments—by the original creator or by a critic—

//accomplishes this task. But in another, and likely more novel, way,

//computational poetics and the code developed out of its practice

//produce a widely distributed new constitution.

To the extent that the “new constitution” could not be implemented without the computer’s knowledge, intentions and beliefs, the computer becomes not merely a device to display the results of human creativity but a collaborator in the project.

Computers as Literary Influences

One branch of literary criticism, somewhat old-fashioned now, is the “influence study,” typically the influence of one writer on another. Harold Bloom made much of this dynamic in his classic study, The Anxiety of Influence: A Theory of Poetry (1973), in which he argued that strong poets struggle against the influence of their precursors to secure their place in the literary canon. For writers creating digital literature, software platforms (and underlying hardware configurations) exert a similar insistent pressure, opening some paths and resisting or blocking others in ways that significantly shape the final work. To elucidate this dynamic, I asked M. D. Coverley (the pen name of Marjorie Luesebrink) to describe her process of creating a digital work. Her account reveals the push-and-pull of software as literary influence (private email January 20, 2018).

Coverley took as her example a work-in-progress, Pacific Surfliner, inspired by the train that runs between San Luis Obispo and San Diego by way of Los Angeles. She says that she starts “with an idea—very rough, no text except perhaps a title or a paragraph.” For this work, she wanted to include videos of the views from the train windows. “In the case of Pacific Surfliner, I decided to use a simple Roxio video-editing program. It outputs mp3 or mp4 files.” The advantage of simplicity, however, is offset by excessive loading times, a strong negative for digital writers who want to keep users engaged: too long a wait, and they are likely to click elsewhere. The solution, she writes, “was to let Vimeo do the compression—but then I had to figure out how to get a Vimeo file to play on my designated HTML page.” Note that these negotiations with the software packages precede actual composition practices and definitively shape how the work will evolve. “These decisions,” Coverley acknowledges, “have already constrained many elements of the message.”

Once she has decided on the software, then comes a period of composition, revision, and exploration of the moving images she wants to use. “For a long stretch, I will arrange and rearrange, crop and edit, expand some ideas, junk others, maybe start over several times… I did about 36 versions of the video [for Pacific Surfliner] and that is about standard.” It is significant that the images and sounds, rather than words, come first in her compositional practices, perhaps because the archive of images and sounds is constrained relative to the possibility space of verbal expression, which is essentially infinite. So software first to make sure the project is feasible, then images and sounds, and only then verbal language. “Once all the other elements are in place—I can see how economical I can be with the prose. If something is already evident in the images, sound, videos, etc. then I need only refer indirectly to that detail in the actual text.”

It is remarkable that Coverley, who began as a novel writer before she turned to digital literature, not only places words last in her compositional practice, but also sees them in many ways as supplements to the non-verbal digital objects already in place. This makes her practice perhaps more akin to film and video production than to literary language, although of much smaller scope since it can be accomplished by a single creator working alone or perhaps with one or two other collaborators. She remarks, “I have always been surprised (and delighted) at how much descriptive text can be dispensed with in hypermedia narrative. This way of writing is one of the chief joys of the medium for me.” Here is influence at the most profound level, transforming her vision of how narrative works and offering new kinds of rewards that lead to further creativity and exploration. The point is not so much the influence of specific software packages and operating platforms, although these are still very significant, but rather the larger context in which she sees her work evolving and reaching audiences. To find a comparable context in conventional influence studies, we may refer to something as looming as literary canonization in Bloom’s theory, a driving motivating force that in his view propels poets onward in the hope of achieving some kind of literary immortality. (Of course, “immortality” in the digital realm is another matter entirely, beset as the field of electronic literature is with problems of platform obsolescence and media inaccessibility.) Nevertheless, the tantalizing prospect of reaching a large audience without going through conventional publishing gateways and the opportunity to experiment with multimodal compositional practices function in parallel ways to literary canonization, the golden promises that make it all seem worthwhile. And this is only possible because of networked and programmable machines. This is the large sense in which computers are our symbionts, facilitating and enabling creative practices that could not exist in their contemporary forms without them.

Computer as Co-Author

Sea and Spar Between does not invoke any form of artificial intelligence, and differs in this respect from Evolution, which does make such an invocation. Montfort and Strickland make this explicit in their comments:

//These rules [governing how the stanzas are created] are simple; there is

//no elaborate AI architecture

//or learned statistical process at work here.

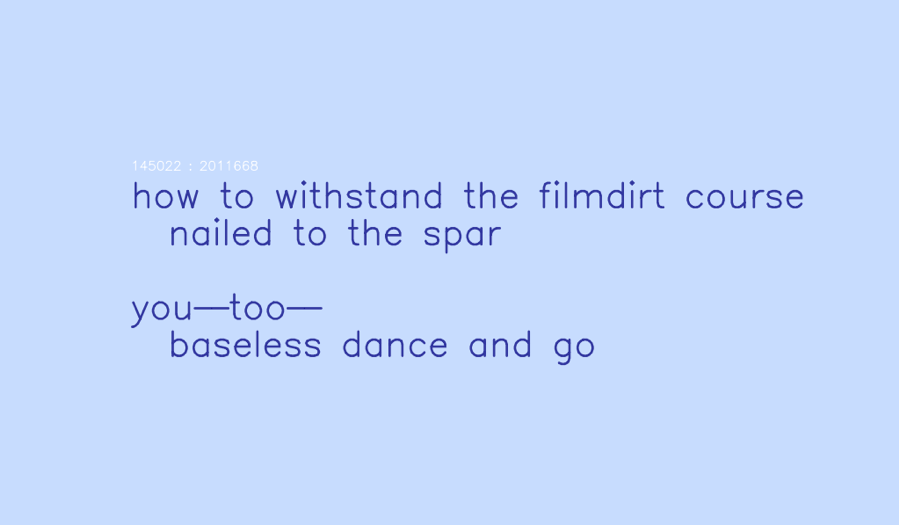

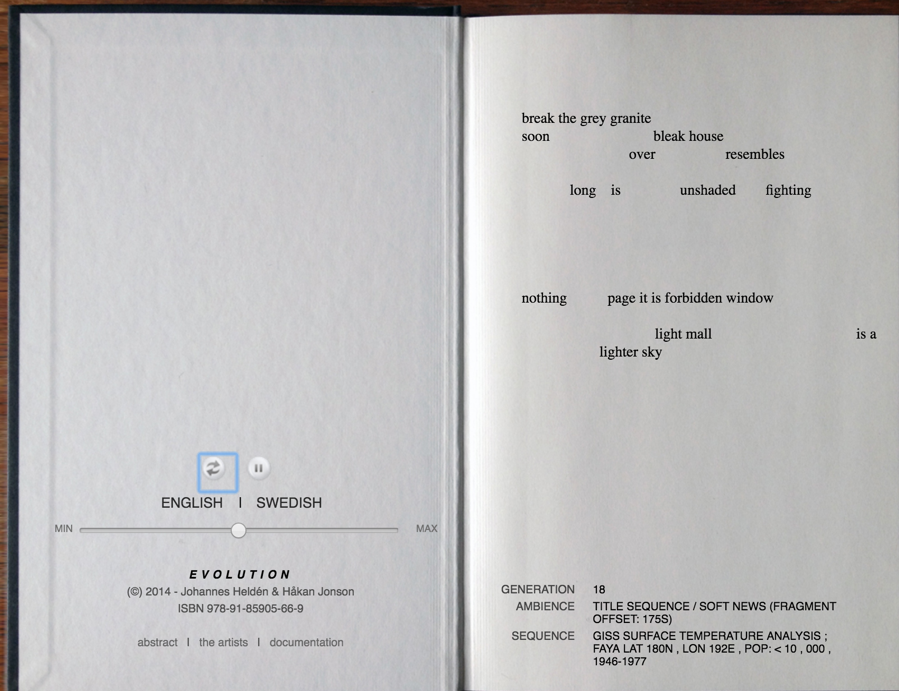

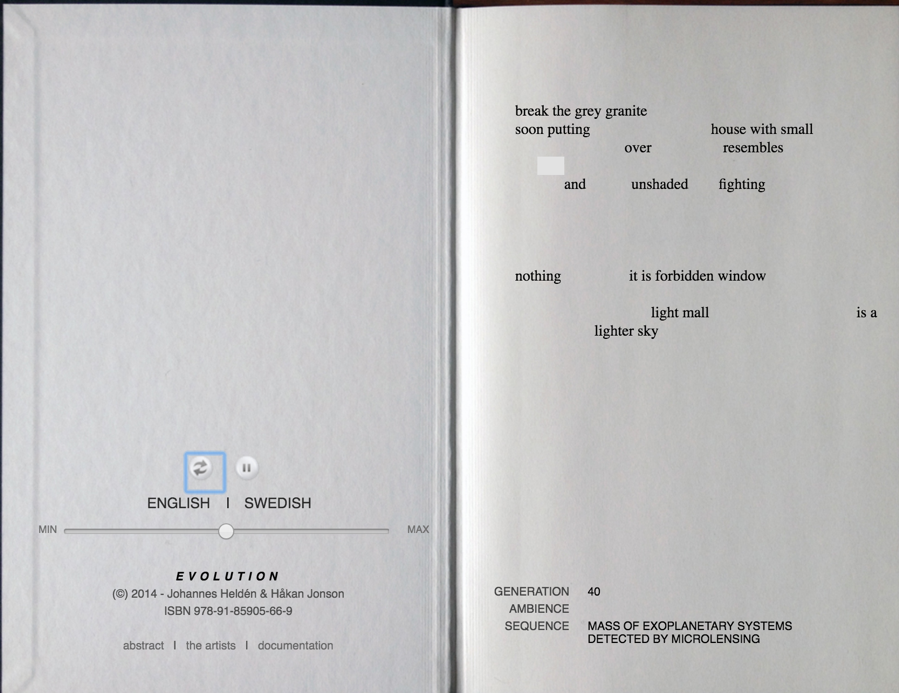

By contrast, Evolution, a collaborative work by poet Johannes Heldén and Håkan Jonson, takes the computer’s role one step further, from collaborator to co-creator, or better perhaps poetic rival, programmed to erase and overwrite the words of Heldén’s original. Heldén is a true polymath, not only writing poetry but also creating visual art, sculpture, and sound art. His books of poetry often contain images, and his exhibitions showcase his work in all these different media. Jonson, a computer programmer by day, also creates visual and sound art, and their collaboration on Evolution reflects the multiple talents of both authors.

The authors write in a preface that the “ultimate goal” is to pass “‘The Imitation Game’ as proposed by Alan Turing in 1951… when new poetry that resembles the work of the original author is created or presented through an algorithm, is it possible to make the distinction between ‘author’ and ‘programmer’?” (Evolution 2013).

These questions, ontological as well as conceptual, are better understood when framed by the actual workings of the program. In the 2013 version, the authors input into a database all ten of the then-extant print books of Heldén’s poetry. A stochastic model of this textual corpus was created using a statistical model known as a Markov Chain (and the corresponding Markov Decision Process), a discrete state process that moves randomly step-wise through the data, with each next step depending only on the present state and not on any previous ones.
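The defining property of a Markov chain, that each step depends only on the present state, can be shown with a minimal word-level sketch. The corpus here is a placeholder sentence, not Heldén’s poetry, and treating single words as states is an assumption; the actual model in Evolution may be built quite differently.

```python
import random
from collections import defaultdict


def build_chain(words):
    """Map each word to the list of words that follow it in the corpus."""
    chain = defaultdict(list)
    for current, following in zip(words, words[1:]):
        chain[current].append(following)
    return chain


def walk(chain, start, steps, rng):
    """Take up to `steps` random steps through the chain.

    The next word is chosen by looking only at the current word,
    never at anything earlier in the walk.
    """
    output = [start]
    state = start
    for _ in range(steps):
        followers = chain.get(state)
        if not followers:  # dead end: this word was never followed by another
            break
        state = rng.choice(followers)
        output.append(state)
    return output


if __name__ == "__main__":
    # Placeholder corpus for illustration only.
    corpus = "the sea holds the light and the light holds the shore".split()
    chain = build_chain(corpus)
    print(" ".join(walk(chain, "the", 8, random.Random())))
```

Every adjacent pair of words in the output occurred somewhere in the corpus, which is why text generated this way tends to echo its source locally while drifting away from it over longer stretches.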

This was coupled with genetic algorithms that work on an evolutionary model. At each generation, a family of algorithms (“children” of the previous generation) is created by introducing variations through a random “seed.” These are then evaluated according to some fitness criteria, and one is selected as the most “fit.” In this case, the fitness criteria are based on elements of Heldén’s style; the idea is to select the “child” algorithm whose output most closely matches Heldén’s own poetic practices. Then this algorithm’s output is used to modify the text, either replacing a word (or words) or changing how a block of white space functions, for example, putting a word where there was white space originally (all the white spaces, coded as individual “letters” through their spatial coordinates on the page, are represented in the database as signifying elements).
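One generation of such an evolutionary process might be sketched as follows. This is a deliberate simplification: it mutates candidate texts directly rather than evolving a family of algorithms, the fitness function (summed word frequencies in a style table) is a hypothetical stand-in for the authors’ stylistic criteria, and the seed is a plain integer. White space is represented as the token `" "` so that it can be replaced like any word.

```python
import random


def mutate(text, vocabulary, rng):
    """Return a child: a copy of `text` with one position replaced.

    The replacement may be a word or the blank token " ", so a word can
    appear where there was white space, and vice versa.
    """
    child = list(text)
    position = rng.randrange(len(child))
    child[position] = rng.choice(vocabulary)
    return child


def fitness(candidate, style_counts):
    """Hypothetical style score: how familiar the candidate's words are.

    `style_counts` maps a word to its frequency in the source poet's corpus.
    """
    return sum(style_counts.get(word, 0) for word in candidate)


def next_generation(text, vocabulary, style_counts, seed, n_children=8):
    """Create children from a random seed and keep the fittest one."""
    rng = random.Random(seed)
    children = [mutate(text, vocabulary, rng) for _ in range(n_children)]
    return max(children, key=lambda child: fitness(child, style_counts))


if __name__ == "__main__":
    # Placeholder text, vocabulary, and counts for illustration only.
    text = ["grey", " ", "sky", " ", "falling"]
    vocabulary = ["ash", "sky", "signal", " "]
    style_counts = {"sky": 3, "ash": 2, "signal": 1}
    for generation in range(5):
        text = next_generation(text, vocabulary, style_counts, seed=generation)
        print(generation, text)
```

Run repeatedly, the loop gradually overwrites the starting text, since each generation begins from the previous generation’s winner.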

The interface presents as an open book, with light grey background and black font. On the left side is a choice between English and Swedish and a slider controlling how fast the text will evolve. On the right side is the text, with words and white spaces arranged as if on a print page. As the user watches, the text changes and evolves; a small white rectangle flashes to indicate spaces undergoing mutation (which might otherwise be invisible if replaced by another space). Each time the program is opened, one of Heldén’s poems is randomly chosen as a starting point, and the display begins after a few hundred iterations have already happened (the authors thought this would be more interesting than starting at the beginning). At the bottom of the “page” the number of the generation is displayed (starting from zero, disregarding the previous iterations that have already happened).

Also displayed is the dataset used to construct the random seed. The dataset changes with each generation, and a total of eighteen different datasets are used, ranging from “mass of exoplanetary systems detected by imaging,” to “GISS surface temperature” for a specific latitude/longitude and range of dates, to “cups of coffee per episode of Twin Peaks” (Evolution 2014, np). These playful selections mix cultural artifacts with terrestrial environmental parameters with astronomical data, suggesting that the evolutionary process can be located within widely varying contexts. The work’s audio, experienced as a continuous and slightly varying drone, is generated in real time from sound pieces that Heldén previously composed. From this dataset, one-minute audio chunks are randomly selected and mixed in using cross-fade, which creates an ambient soundtrack unique for each view (“The Algorithm,” Evolution 2014, np).
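The cross-fade used to join audio chunks can be illustrated with a small sketch operating on plain lists of sample values. The linear fade curve and the function name are assumptions; the source says only that chunks are randomly selected and mixed using cross-fade.

```python
import random


def crossfade(a, b, overlap):
    """Join two chunks, fading `a` out and `b` in over `overlap` samples.

    A linear fade is assumed here; real audio tools often use other curves.
    """
    if overlap < 1 or overlap > min(len(a), len(b)):
        raise ValueError("overlap must be between 1 and the shorter chunk's length")
    head = a[:-overlap]   # part of `a` that plays alone
    tail = b[overlap:]    # part of `b` that plays alone
    mixed = []
    for i in range(overlap):
        t = (i + 1) / (overlap + 1)  # fade position, strictly between 0 and 1
        mixed.append(a[len(a) - overlap + i] * (1 - t) + b[i] * t)
    return head + mixed + tail


if __name__ == "__main__":
    # Placeholder "chunks": constant signals standing in for recorded audio.
    library = [[1.0] * 6, [0.5] * 6, [0.0] * 6]
    rng = random.Random()
    first, second = rng.choice(library), rng.choice(library)
    print(crossfade(first, second, overlap=3))
```

Because the chunks are chosen at random and blended rather than cut, no two sessions need produce the same sequence, which is consistent with the authors’ description of a soundtrack unique to each viewing.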

The text will continue to evolve as long as the user keeps the screen open, with no necessary end point or teleology, only the continuing replacement of Heldén’s words with those of the algorithm. One could theoretically reach a point where all of Heldén’s original words have been replaced, in which case the program would continue to evolve its own constructions in exactly the same way as it had operated on Heldén’s words/spaces.

In addition to being available online, the work is also represented by a limited edition print book, in which all the code is printed out (Evolution 2014). The book also has appendices containing brief commentaries by well-known critics, including John Cayley, Maria Engberg, and Jesper Olsson. Cayley seems (consciously or unconsciously) to be influenced by the ever-evolving work, adopting a style that evolves through restatements with slight variations (Appendix 2: “Breath,” np). For example, he suggests the work is “an extension of his [Heldén’s] field of poetic life, his articulated breath, manifest as graphically represented linguistic idealities, fragments from poetic compositions, I assume, that were previously composed by privileged human processes proceeding through the mind and body of Heldén and passing out of him in a practice of writing… . I might be concerned, troubled because I am troubled philosophically by the ontology … the problematic being… of linguistic artifacts that are generated by compositional process such that they may never actually be—or never be able to be … read by any human having the mind and body to read them.” “Mind and body” repeats, as do “composed/composition,” “troubled,” and “never actually be/never be able,” but each time in a new context that slightly alters the meaning. When Cayley speaks of being “troubled,” he refers to one of the crucial differences in embodiment between human and machine: whereas the human needs to sleep, eat, visit the bathroom, the machine can continue indefinitely, not having the same kind of “mind and body” as human writers or readers. The sense of excess, of exponentially larger processes than human minds and bodies can contain, recalls the excess of Sea and Spar Between and gestures toward the new scales possible when computational media become co-creators.

Maria Engberg, in “Appendix 3: Chance Operations,” parallels Evolution to the Cageian aesthetic mentioned earlier. She quotes Cayley’s emphasis on process over object. “‘What if we shift our attention,’” Cayley writes, “‘decidedly to practices, processes, procedures—towards ways of writing and reading rather than dwelling on either textual artifacts themselves (even time-based literary objects) or the concept underpinning objects-as-artifacts?’” (Evolution 2014, np). In this instance, the concept underpinning the object is itself a series of endless processes, displacing, mutating, evolving, so the distinction between concept and process becomes blurred, if not altogether deconstructed.

Jesper Olsson, in “Appendix 4: We Have to Trust the Machine,” also sees an analogy in Cage’s work, commenting “It was not the poet expressing himself. He was at best a medium for something else.” What is this “something else” if not machinic intelligence struggling to enact evolutionary processes so that it can write like Heldén, albeit without the “mind and body” that produced the poetry in the first place? A disturbing analogy comes to mind: H. G. Wells’ The Island of Doctor Moreau and the half-human beasts who keep asking, “Are we not men?” In the contemporary world, the porous borderline is not between human/animal but human/machine. Olsson sees “this way of setting up rules, coding writing programs” as “an attempt to align the subject with the world, to negotiate the differences and similarities between ourselves and the objects with which we co-exist.” Machine intelligence has so completely penetrated complex human systems that it has become our “natureculture,” as Jonas Ingvarsson calls it (Appendix 5: “The Within of Things,” Evolution 2014, np). He points to this conclusion when he writes, “The signs are all over Heldén’s poetic and artistic output. Computer supported lyrics about nature and environments, graphics and audio paint urbannatural land-and soundscapes … We witness the (always already ongoing) merge of artificial and biological consciousness.” (Appendix 5, “The Within of Things,” np).

How does Heldén feel about his dis/re/placement by machinic intelligence? I had an opportunity to ask him when Danuta Fjellestad and I met Heldén, Jonson, and Jesper Olsson at a Stockholm restaurant for dinner and a demonstration of Evolution (private communication, March 16, 2018). In a comment reminiscent of Cage, he remarked that he felt “relieved,” as if a burden of subjectivity had been lifted from his shoulders. He recounted starting Evolution that afternoon and watching it for a long time. At first, he amused himself by thinking “me” or “not me” as new words appeared on screen. Soon, however, he came to feel that this was not the most interesting question he could ask; rather, he began to see that when the program was “working at its best,” its processes created new ideas, conjunctions, and insights that would not have occurred to him (this is, of course, from a human point of view, since the machine has no way to assess its productions as insights or ideas, only as more or less fit according to criteria based on Heldén’s style). That this fusion of human and machine intelligence could produce something better than either operating alone, he commented, made him feel “joyous,” as if he had helped to bring something new into the world based on his own artistic and poetic creations but also at times exceeding them. In this sense Evolution reveals the power of literature conceived as a cognitive assemblage, in which cognitions are distributed between human and technical actors, with information, interpretations and meanings circulating throughout the assemblage in all directions, outward from humans into machines, then outward from machines back to humans.

Super(human)intelligence: The Potential of Neural Nets

In several places, Heldén and Jonson describe Evolution as powered by artificial intelligence. A skeptic might respond that genetic algorithms are not intelligent at all; they know nothing about the semantics of the work and operate through procedures that are in principle relatively simple (acknowledging that the ways random “seeds” are used and fitness criteria are developed and applied in this work are far from simple, not to mention the presentation layers of code). The power of genetic algorithms derives from finding ways to incorporate evolutionary dynamics within an artificial medium, but like many evolutionary processes, they are not smart in themselves, any more than are the evolutionary strategies that animals with tiny brains like fruit flies, or no brain at all like nematode worms, have developed through natural selection. When I asked Jonson about this objection, he indicated that for him as a programmer, the important part was the more accurate description of genetic algorithms as “population-based meta-heuristic optimization algorithms” (“The Algorithm,” np). Whether this counts as “artificial intelligence” he regarded as a trivial point.

Nevertheless, to answer the skeptic, we can consider stronger forms of artificial intelligence such as recurrent neural nets. After what has been described as the “long winter” of AI when the early promise and enthusiasm of the 1950s-60s seemed to fizzle out, a leap forward occurred with the development of neural networks, which use a system of nodes communicating with each other to mimic synaptic networks in human and animal brains. Unlike earlier versions of artificial intelligence, neural networks are engineered to use recursive dynamics in processes that not only use the output of a previous trial as input for the next (that is, feedback), but in addition change the various “weights” of the nodes, resulting in changes in the structure of the network itself. This amounts to a form of learning that, unlike genetic algorithms which use random variation undirected by previous results (because they rely on Markov chains), uses the results of previous iterations to change how the net functions. Neural nets are now employed in many artificial intelligence systems, including machine translations, speech recognition, computer vision, and social networks. Recurrent neural networks (RNN) are a special class of neural nets where connections between units form a directed graph along a sequence. This allows them to exhibit dynamic temporal behavior for a time sequence. Unlike feedforward neural nets, RNNs have internal memory and can use it to process inputs, which is particularly useful for tasks where the input may be unsegmented (that is, not broken into discrete units) such as face recognition and handwriting.
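
Two of the ideas in this paragraph, internal memory and learning by adjusting weights, can be made concrete with a deliberately tiny Python sketch. It makes no claim about the architecture of any system named in this essay: the single-unit recurrent cell, the use of gradient descent on squared error as the learning rule, and all parameter values are assumptions chosen for brevity.

```python
import math


def rnn_forward(inputs, w_in, w_rec, bias):
    """Scalar recurrent unit: each state depends on the input and the prior state."""
    h = 0.0  # internal memory, carried from one time step to the next
    states = []
    for x in inputs:
        h = math.tanh(w_in * x + w_rec * h + bias)
        states.append(h)
    return states


def train_neuron(samples, lr=0.1, epochs=200):
    """Fit y = w*x + b by nudging the weights after every error (gradient descent)."""
    w, b = 0.0, 0.0
    for _ in range(epochs):
        for x, y in samples:
            error = (w * x + b) - y  # result of the previous trial
            w -= lr * error * x      # that result changes the weights
            b -= lr * error
    return w, b


if __name__ == "__main__":
    # With a recurrent weight, the first input still echoes at later steps.
    print(rnn_forward([1.0, 0.0, 0.0], w_in=1.0, w_rec=0.5, bias=0.0))
    # Without it, the unit forgets as soon as the input stops.
    print(rnn_forward([1.0, 0.0, 0.0], w_in=1.0, w_rec=0.0, bias=0.0))
    # Weights learned from examples of the hypothetical rule y = 2x + 1.
    print(train_neuron([(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]))
```

The first function shows feedback alone; the second shows what distinguishes learning from feedback, namely that the outcome of each trial alters the weights used in the next.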

A stunning example of the potential of neural net architecture is AlphaGo, which recently beat the human Go champions, Lee Sedol in 2016 and Ke Jie in 2017. Go is considered more “intuitive” than chess, having exponentially more possible moves, with a possibility space vastly greater than the number of atoms in the universe (10^240 moves vs. 10^74 atoms). With numbers this unimaginably large, brute computational methods simply will not work—but neural nets, working iteratively through successive rounds of inputs and outputs with a hidden layer that adjusts how the connections are weighted, can learn in ways that are flexible and adaptive, much as biological brains learn.

Now DeepMind, the company that developed AlphaGo (recently acquired by Google), has developed a new version that “learns from scratch,” AlphaGoZero. AlphaGoZero combines neural net architecture with a powerful search algorithm designed to explore the Go possibility space in ways that are computationally tractable. Whereas AlphaGo was trained on many human-played games as examples, its successor uses no human input at all, starting only with the basic rules of the game. Then it plays against itself and learns strategies through trial and error. At three hours, AlphaGoZero was at the level of a beginning player, focusing on immediate advances rather than long-term strategies; at 19 hours it had advanced to an intermediate level, able to evolve and pursue long-term goals; and at 70 hours, it was playing at a superhuman level, able to beat AlphaGo 100 games to 0, and arguably becoming the best Go player on the planet.

Of course, programs like this succeed because they are specific to a narrow knowledge domain, in this case, the game of Go. All such programs, including AlphaGoZero, lack the flexibility of human cognition, which can range across multiple domains, making connections, drawing inferences, and reaching conclusions in ways that no existing artificial intelligence program can match. The race is on, however, to develop General Artificial Intelligence (GAI), programs that have this kind of flexibility and adaptability. Many experts in AI expect this goal to be reached around mid-century, with a 90% confidence level (Bostrom 2016:23). Such an AI would combine the best of human intelligence with the powers of machine cognition, including vastly faster processing speeds, much greater memory storage, and the ability to operate 24/7. If that happens, there is no guarantee that humans would succeed in developing constraints to keep such an intelligence confined to following human agendas and not pursuing its own desires for its own ends (Bostrom 2016; Roden 2014).

It is easy to see how this could be a scary prospect indeed, including, as Stephen Hawking has warned, the end of humanity (Cellan-Jones 2014). However, since this is an essay on literature and computational media, I want to conclude by referring to Stanislaw Lem’s playful fable about what would happen if such a superintelligence took to writing verse. In “The First Sally (A), or Trurl’s Electronic Bard” (Lem 2014), Trurl (a robot constructor who has no mean intelligence himself, although with very human flaws) builds a robot versifier several stories high. Rather than working on a previously written (human) poem, as Evolution does, Trurl re-creates the evolutionary process itself. Reasoning that the average poet carries in his head the evolutionary history of his civilization, which carries the previous civilization and so on, he simulates the entire history of intelligent life on earth from unicellular organisms up to his own culture, descendants of the preceding human civilization. Something goes wrong with the emergence of the primates, however, when a fly in the simulated ointment causes a glitch, leading not to great apes but gray drapes. Fixing this problem, Trurl succeeds in creating a multi-story robot that can only produce doggerel. Lem, ever the satirist, recounts how he finally solves the problem: “Trurl was struck by an inspiration; tossing out all the logic circuits, he replaced them with self-regulating egocentripetal narcissistors” (Lem, The Cyberiad 46).

Demonstrating the Electronic Bard for his friend (and sometimes rival constructor) Klapaucius, Trurl invites him to devise a challenge for the robot versifier. Klapaucius, wishing his friend to fail, invents a nearly impossible task: “a love poem, lyrical, pastoral, and expressed in the language of pure mathematics. Tensor algebra mainly, with a little topology and higher calculus” (Lem, The Cyberiad 52). Although Trurl objects, the versifier has already begun: “Come, let us hasten to a higher plane / Where dyads tread the fairy fields of Venn / Their indices bedecked from one to n, / commingled in an endless Markov chain!” (Lem, The Cyberiad 52). So the Markov chain surfaces again, although to be fair, it is far, far easier to imagine such a versifier in words than to create it through algorithms that actually run as computational processes!

There follow scenarios reminiscent of the predictions of those worried about superintelligence, although in a fanciful vein. The Electronic Bard crosses “lyrical swords” with all the best poets: “The machine would let each challenger recite, instantly grasp the algorithm of his verse, and use it to compose an answer in exactly the same style, only two hundred and twenty to three hundred and forty-seven times better” (Lem, The Cyberiad 54). The Electronic Bard enacts the same kind of procedure animating Evolution, but vastly accelerated, the faux precision underscoring its absurdity.

Just as critics warn that a superintelligence could outsmart any human constraints on its operation, so the Electronic Bard disarms every attempt to dismantle it with verses so compelling they overwhelm its attackers, including Trurl. The authorities are just about to bomb it into submission when “some ruler from a neighboring star system came, bought the machine and hauled it off” (Lem, The Cyberiad 56). When supernovae begin “exploding on the southern horizon,” rumors report that the ruler, “moved by some strange whim, had ordered his astroengineers to connect the electronic bard to a constellation of white supergiants, thereby transforming each line of verse into a stupendous solar prominence; thus the Greatest Poet in the Universe was able to transmit its thermonuclear creations to all the illimitable reaches of space at once” (Lem, The Cyberiad 57). The scale now so far exceeds the boundaries of (human and robot) life, however, that it paradoxically fades into insignificance: “it was all too far away to bother Trurl” (Lem, The Cyberiad 57).

We may suppose that this fanciful extrapolation of Evolution is “all too far away” to bother us, so we plunge back into our present reality when computational media are struggling merely to come close to simulating human achievements. Lem’s fable does not quite vanish altogether, however, suggesting that even the most vaunted preserve of human consciousness, sensitivity, and creativity—that is, lyrical poetry—is not necessarily exempt from machine collaboration, and yes, even competition (see Chakraborty 2018 for an analysis of a posthuman strain within the lyric). By convention, symbionts are regarded as junior partners in the relationship, like the bacteria that live in the human gut. We are now on the verge of developments that promote our computational symbionts to full partnership in our literary endeavors. The trajectory traced here through electronic literature demonstrates that the dread with which some anticipate this future has a counterforce in the creative artists and writers who see in this prospect occasions for joy and relief.

Whatever one makes of this posthuman future, it signals the end of the era when humans could regard themselves as the privileged rational beings whose divine inheritance was dominion over the earth. The complex human-technical systems that now permeate the infrastructure of developed societies point toward a humbler, more accurate picture of humans as only one kind of cognizer among many. In our planetary ecology, co-constituted by humans, nonhumans and technical devices, we are charged with the responsibility to preserve and protect the cognitive capabilities that all biological lifeforms exhibit, and to respect the material forces from which they spring. If we are to survive, so must the environments on which all cognition ultimately depends.

Works Cited

Bloom, Harold. 1973. The Anxiety of Influence: A Theory of Poetry. Oxford: Oxford University Press.

Bostrom, Nick. 2016. Superintelligence: Paths, Dangers, Strategies. Oxford: Oxford University Press.

Cayley, John. 2014. Appendix 2: “Breath,” in Evolution. Stockholm: OEI editör. N.p.

Cellan-Jones, Rory. 2014. “Stephen Hawking Warns Artificial Intelligence Could End Mankind,” BBC News, December 2. http://www.bbc.co.uk/news/technology-30290540. Accessed March 26, 2018.

Chakraborty, Sumita. 2018. Signs of Feeling Everywhere: Lyric Poetics, Posthuman Ecologies, and Ethics in the Anthropocene. Dissertation, Emory University.

Coverley, M. D. 2018. Private Communication, January 20.

DeepMind. 2017. “AlphaGoZero: Learning from Scratch.” https://deepmind.com/blog/alphago-zero-learning-scratch/. Accessed March 26, 2018.

Deleuze, Gilles and Félix Guattari. 1987. A Thousand Plateaus: Capitalism and Schizophrenia. Translated by Brian Massumi. Minneapolis: University of Minnesota Press.

Engberg, Maria. 2014. Appendix 3: “Chance Operations,” in Evolution. Stockholm: OEI editör. N.p.

Funkhouser, Christopher T. 2007. Prehistoric Digital Poetry: An Archaeology of Forms, 1959-1995. Tuscaloosa AL: University of Alabama Press.

Hayles, N. Katherine. 1994. “The Paradoxes of John Cage: Chaos, Time, and Irreversible Art” in Permission Granted: Composed in America, ed. Marjorie Perloff and Charles Junkerman. Chicago: University of Chicago Press: 226-241.

———. 2017. Unthought: The Power of the Cognitive Nonconscious. Chicago: University of Chicago Press.

Heldén, Johannes and Håkan Jonson. 2013. Evolution. https://www.johanneshelden.com/evolution/. Accessed March 26, 2018.

———. 2014. Evolution. Stockholm: OEI editör.

———. 2014. “The Algorithm,” in Evolution. Stockholm: OEI editör. N.p.

Ingvarsson, Jonas. 2014. Appendix 5: “The Within of Things,” in Evolution. Stockholm: OEI editör. N.p.

Latour, Bruno. 2007. Reassembling the Social: An Introduction to Actor-Network Theory. Oxford: Oxford University Press.

Lem, Stanislaw. 2014. “The First Sally (A), or Trurl’s Electronic Bard,” in The Cyberiad: Fables for a Cybernetic Age. New York: Penguin. Pp. 43-57.

Montfort, Nick. Nd. “Taroko Gorge.” nickm.com/taroko_gorge/. Accessed March 26, 2018.

Montfort, Nick and Stephanie Strickland. “cut to fit the tool-spun course.” elmcip.net/critical-writing/cut-fit-tool-spun-course. Accessed March 26, 2018.

———. Sea and Spar Between. nickm.com/montfort_strickland/sea_and_spar_between/. Accessed March 26, 2018.

———. “Spars of Language Lost at Sea.” conference.eliterature.org/sites/default/files/papers/Montfort_Stric. Accessed March 26, 2018.

Moulthrop, Stuart and Dene Grigar. 2017. Traversals: The Use of Preservation for Early Electronic Writing. Cambridge: MIT Press. Kindle version.

Olsson, Jesper. 2014. Appendix 4: “We Have to Trust the Machine,” in Evolution. Stockholm: OEI editör. N.p.

Rettberg, Scott. Nd. “Tokyo Garage.” nickm.com/tokyo_garage/. Accessed March 26, 2018.

Robinson, Kim Stanley. 1997. Blue Mars. New York: Spectra.

———. 1995. Green Mars. New York: Bantam Spectra.

———. 1993. Red Mars. New York: Spectra.

Roden, David. 2014. Posthuman Life: Philosophy at the Edge of the Human. New York and London: Routledge.

Searle, John. 1980. “Minds, Brains, and Programs,” Behavioral and Brain Sciences 3:417-457.

Tenen, Dennis. 2017. Plain Text: The Poetics of Computation. Stanford: Stanford University Press.

Thoreau, Henry David. 1854. Walden; or, Life in the Woods. Boston: Ticknor and Fields.

Cite this article

Hayles, Katherine. "Literary Texts as Cognitive Assemblages: The Case of Electronic Literature" electronic book review, 5 August 2018, https://doi.org/10.7273/8p9a-7854